2020-10-22

A go-to choice for users seeking information online, search engines are shifting from keyword-matching systems to question-answering machines. Users today have little patience for scrolling through a results page, particularly when they type queries on mobile phones or ask a question to a digital personal assistant. A short, concise answer will usually suffice.

To power this shift, researchers are studying open-domain question answering (QA), a long-standing research area that teaches computers to return answers from a large corpus of documents.

We are excited to introduce RocketQA, a novel training approach to dense passage retrieval for open-domain question answering that significantly outperforms previous state-of-the-art models on the two largest public datasets for open-domain QA derived from search engines. It has also topped the MSMARCO Passage Ranking leaderboard hosted by Microsoft. RocketQA is now being applied to Baidu’s core businesses, such as search and advertising, and will play a key role in many more applications.

Paper: https://arxiv.org/abs/2010.08191

Leaderboard: https://microsoft.github.io/msmarco/#ranking

Challenges of end-to-end retriever models

Traditional QA systems were usually built as a complicated pipeline of multiple components, including question understanding, document retrieval, passage ranking and answer extraction. Over the past few years, the end-to-end training approach has been gaining traction thanks to recent advances in deep learning-based machine reading comprehension. End-to-end methods promise better efficiency by removing redundant modules from traditional QA pipelines, and better performance because the whole QA system can learn and improve from users’ real-time feedback.

One of the most popular QA architectures today is the two-stage retriever-reader. A retriever model finds passages that contain an answer in a large collection of documents, and a reader model then pinpoints the answer. In our study, we mainly focus on end-to-end retriever models.

Traditional retrievers are gradually being eclipsed by the emerging dual-encoder architecture, which learns dense representations of questions and passages in an end-to-end manner. It first encodes questions and passages separately, and then scores each question-passage pair by applying a similarity function to their dense representations.
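The scoring step of a dual-encoder can be illustrated with a minimal sketch. It assumes the dot product as the similarity function (the text above does not name one) and uses hand-written toy vectors in place of real encoder outputs; the names `dot` and `rank_passages` are hypothetical.

```python
from typing import List, Sequence


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Dot-product similarity between two dense vectors of equal length."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    return sum(a * b for a, b in zip(u, v))


def rank_passages(question_vec: Sequence[float],
                  passage_vecs: Sequence[Sequence[float]]) -> List[int]:
    """Return passage indices sorted from most to least similar."""
    scores = [dot(question_vec, p) for p in passage_vecs]
    return sorted(range(len(passage_vecs)), key=lambda i: scores[i], reverse=True)


# Toy embeddings standing in for the outputs of the two encoders (assumption).
question = [1.0, 0.0, 1.0]
passages = [
    [0.9, 0.1, 0.8],  # close to the question
    [0.0, 1.0, 0.0],  # unrelated
    [0.5, 0.5, 0.5],  # partially related
]
ranking = rank_passages(question, passages)
```

Because passages are encoded independently of the question, their vectors can be computed once and reused for every incoming query.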

However, three challenges remained for the effective training of a dual-encoder for dense passage retrieval:

- Discrepancy between training and inference for the dual-encoder retriever;

- A large number of unlabeled positives;

- High costs to acquire large-scale training data for open-domain QA.

To address these challenges and train the dual-encoder retriever for open-domain QA effectively, we proposed RocketQA, an optimized training approach that improves dense passage retrieval. As the pre-trained language model, we used ERNIE 2.0, a continual pre-training framework that learns pre-training tasks incrementally through constant multi-task learning.

RocketQA and its three training techniques

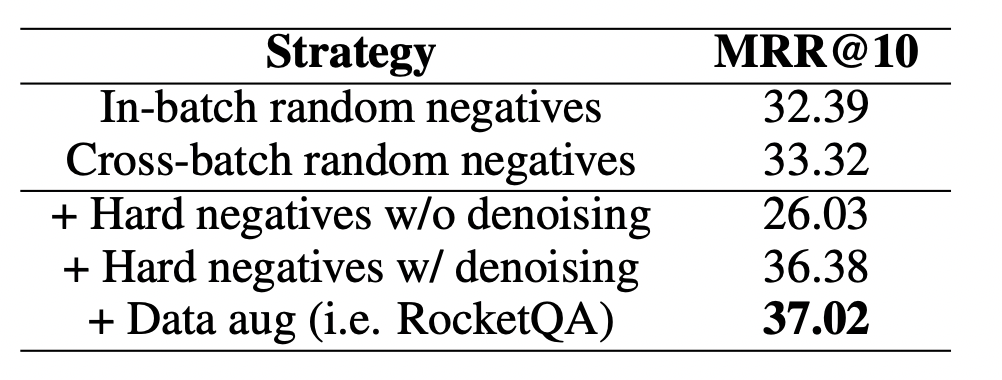

In RocketQA, we proposed three improved training strategies to address the above challenges.

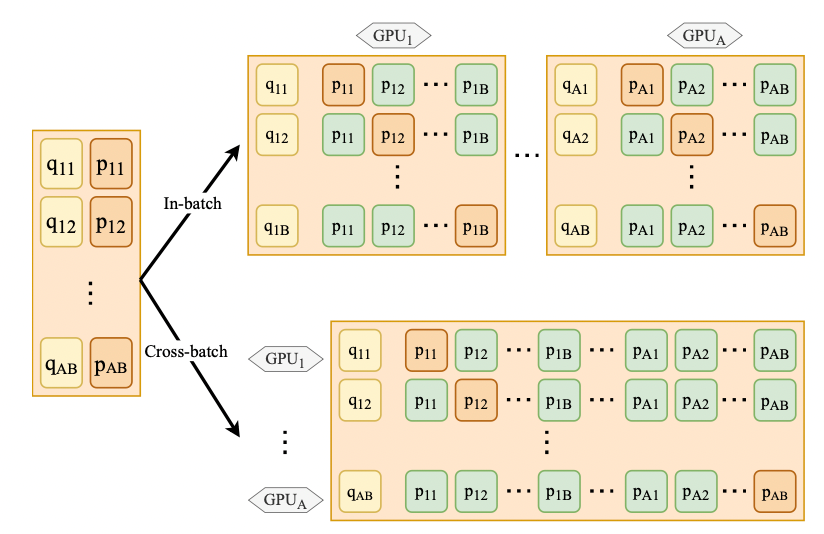

Cross-batch negatives: In-batch negatives have become a common trick for generating more training pairs for QA without sampling new negatives. We optimized training with even more negatives by first computing the passage embeddings within each GPU, and then sharing these passage embeddings among all the GPUs. For each question, we collected the examples (their dense representations) from the other GPUs as additional negatives.

Assume there are B questions in a mini-batch on a single GPU and A GPUs training in a data-parallel way:

- With in-batch negatives, each question can be further paired with B − 1 negatives;

- With cross-batch negatives, we can obtain A × B − 1 negatives for a given question.
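The two counts above can be checked with a small single-process simulation. This is only a sketch: real training shares embeddings across GPUs with a collective operation, whereas here a nested list plays the role of the per-GPU batches, and all function names are hypothetical.

```python
from typing import List


def in_batch_negative_count(batch_size: int) -> int:
    """Negatives per question when only the local mini-batch is used (B - 1)."""
    return batch_size - 1


def cross_batch_negative_count(num_gpus: int, batch_size: int) -> int:
    """Negatives per question when passages from all GPUs are shared (A * B - 1)."""
    return num_gpus * batch_size - 1


def cross_batch_negatives(passages_per_gpu: List[List[str]],
                          gpu: int, index: int) -> List[str]:
    """Collect every passage on every GPU except the question's own positive.

    passages_per_gpu[g][i] is the positive passage of question i on GPU g.
    """
    return [
        passage
        for g, batch in enumerate(passages_per_gpu)
        for i, passage in enumerate(batch)
        if (g, i) != (gpu, index)
    ]


# Toy setup: A = 2 GPUs, B = 3 questions per GPU.
passages_per_gpu = [["p00", "p01", "p02"], ["p10", "p11", "p12"]]
negatives = cross_batch_negatives(passages_per_gpu, gpu=0, index=1)
```

With A = 2 and B = 3, the question at position 1 on GPU 0 gets 5 negatives instead of the 2 it would get from its own mini-batch.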

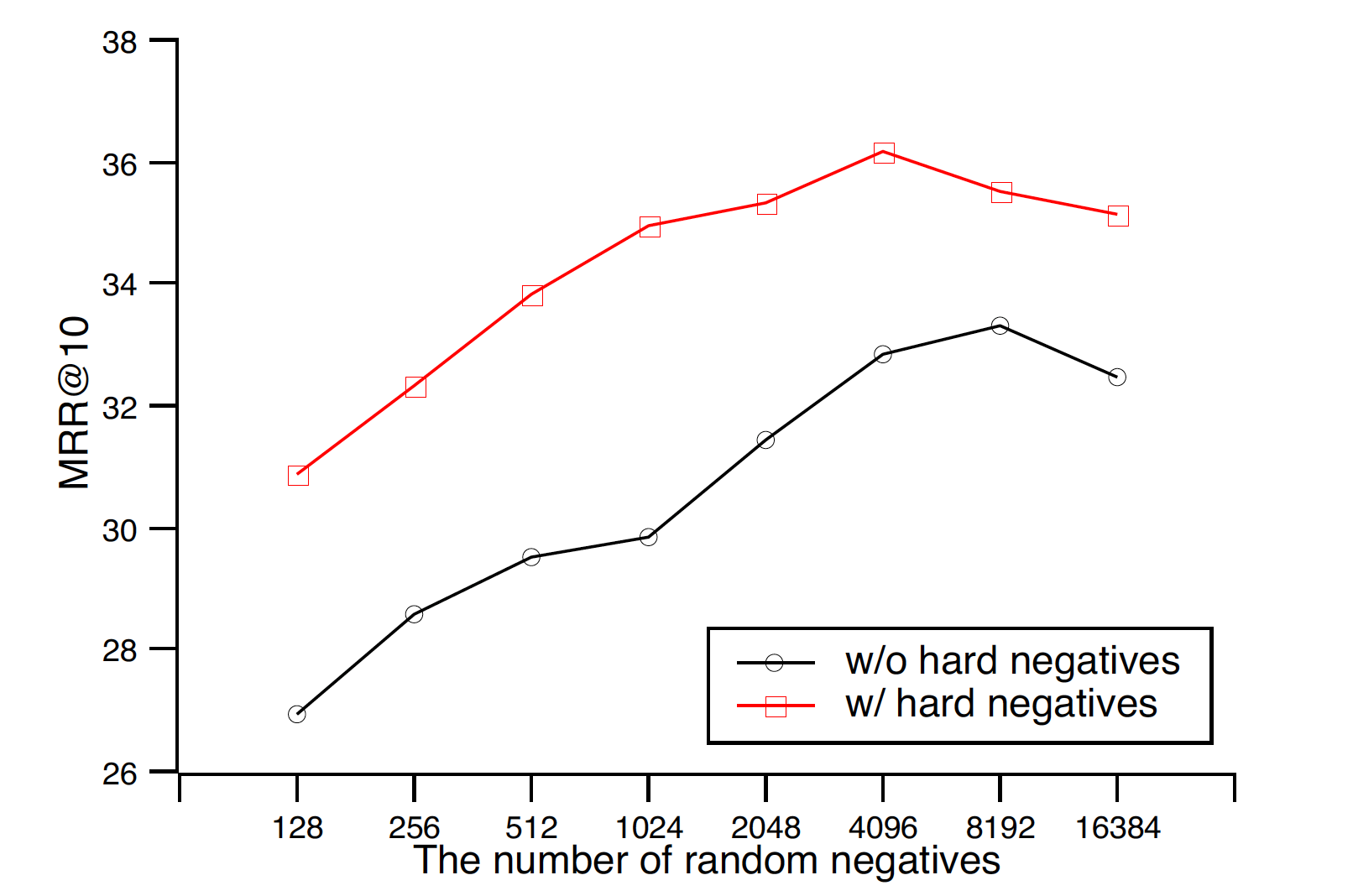

In our experiments, model performance increased significantly as the number of random negatives grew.

Cross-batch negatives

Denoised hard negative sampling: “Hard negatives” have proven to be more important than “easy negatives” for training a dual-encoder. The usual way to obtain hard negatives is to select top-ranked passages as negative samples, but these are likely to contain false negatives.

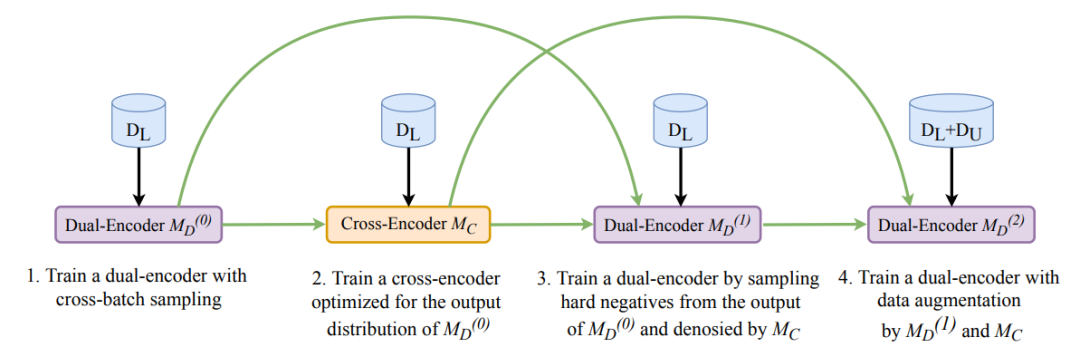

Our solution was to first train a cross-encoder on the original training data. The learned cross-encoder can measure the similarity between questions and passages. Then, when sampling hard negatives from the top-ranked passages retrieved by a dense retriever, we removed the passages that the cross-encoder predicted as positives with high confidence scores. The cross-encoder architecture is more powerful in capturing two-way semantic interaction and shows much better performance than the dual-encoder architecture.
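The filtering step described above can be sketched as follows. The cross-encoder is represented by a plain scoring function, and the threshold `positive_threshold`, the count `num_negatives` and the toy scores are illustrative assumptions, not values from our experiments.

```python
from typing import Callable, List, Set


def denoised_hard_negatives(
    question: str,
    retrieved: List[str],
    labeled_positives: Set[str],
    cross_encoder_score: Callable[[str, str], float],
    positive_threshold: float = 0.9,  # hypothetical confidence cutoff
    num_negatives: int = 2,           # hypothetical number of negatives to keep
) -> List[str]:
    """Pick hard negatives from top-ranked passages, dropping likely false negatives."""
    negatives: List[str] = []
    for passage in retrieved:  # retrieved is ordered from best to worst rank
        if passage in labeled_positives:
            continue  # a labeled positive is never a negative
        if cross_encoder_score(question, passage) >= positive_threshold:
            continue  # confidently predicted positive: probably an unlabeled positive
        negatives.append(passage)
        if len(negatives) == num_negatives:
            break
    return negatives


# Toy confidence scores standing in for a trained cross-encoder (assumption).
toy_confidence = {"p1": 0.97, "p2": 0.95, "p3": 0.20, "p4": 0.05, "p5": 0.40}


def toy_cross_encoder(question: str, passage: str) -> float:
    return toy_confidence[passage]


hard_negatives = denoised_hard_negatives(
    "q", ["p1", "p2", "p3", "p4", "p5"], {"p1"}, toy_cross_encoder
)
```

Here `p2` is ranked high by the retriever but has no label; because the toy cross-encoder scores it as a confident positive, it is excluded rather than used as a negative.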

Data Augmentation: We further augmented the training data by using the cross-encoder to annotate unlabeled questions.

We collected 1.5 million unlabeled questions from Yahoo! Answers and ORCAS, and then used the learned cross-encoder to predict the passage labels for the new questions. To ensure the quality of the automatically labeled data, we only selected the predicted positive and negative passages with high confidence scores estimated by the cross-encoder. The automatically labeled data was then used as augmented training data for the dual-encoder.
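A minimal sketch of this confidence-based selection is given below. The two thresholds and the toy scores are assumptions made for illustration, and `pseudo_label` is a hypothetical helper name.

```python
from typing import Callable, List, Tuple


def pseudo_label(
    question: str,
    candidates: List[str],
    cross_encoder_score: Callable[[str, str], float],
    positive_threshold: float = 0.9,  # hypothetical: keep as positive at or above this
    negative_threshold: float = 0.1,  # hypothetical: keep as negative at or below this
) -> Tuple[List[str], List[str]]:
    """Label candidate passages for an unlabeled question, keeping confident ones only."""
    positives: List[str] = []
    negatives: List[str] = []
    for passage in candidates:
        score = cross_encoder_score(question, passage)
        if score >= positive_threshold:
            positives.append(passage)
        elif score <= negative_threshold:
            negatives.append(passage)
        # Passages with scores in between are discarded as too uncertain.
    return positives, negatives


# Toy scores standing in for cross-encoder predictions (assumption).
toy_label_scores = {"p1": 0.95, "p2": 0.50, "p3": 0.03}
positives, negatives_for_question = pseudo_label(
    "unlabeled question",
    ["p1", "p2", "p3"],
    lambda q, p: toy_label_scores[p],
)
```

Discarding the uncertain middle band trades data volume for label quality, which matches the goal of keeping only high-confidence predictions.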

The ablation study of RocketQA on MSMARCO Passage Ranking

We organized the above three strategies into an effective training pipeline for the dual-encoder. Like a multi-stage rocket, the pipeline improves the performance of the dual-encoder successively over three steps. That is why we called our approach RocketQA.

The pipeline of the optimized training approach RocketQA

Dataset and evaluations

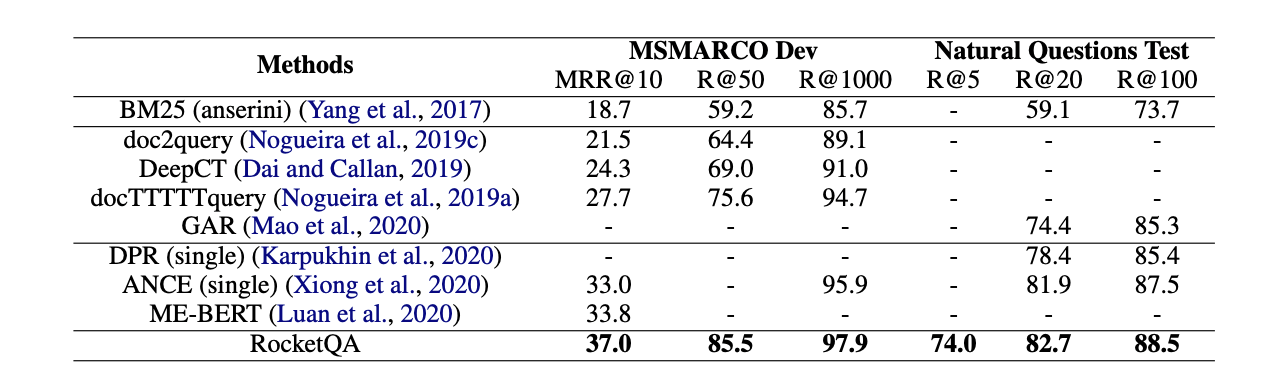

We conducted the experiments on two popular QA benchmarks: MSMARCO Passage Ranking and Natural Questions. The two datasets are constructed from Bing and Google search logs, respectively. Our main experimental results showed that RocketQA significantly outperforms all the baselines on both datasets, including DPR and ME-BERT.

The performance comparison on passage retrieval

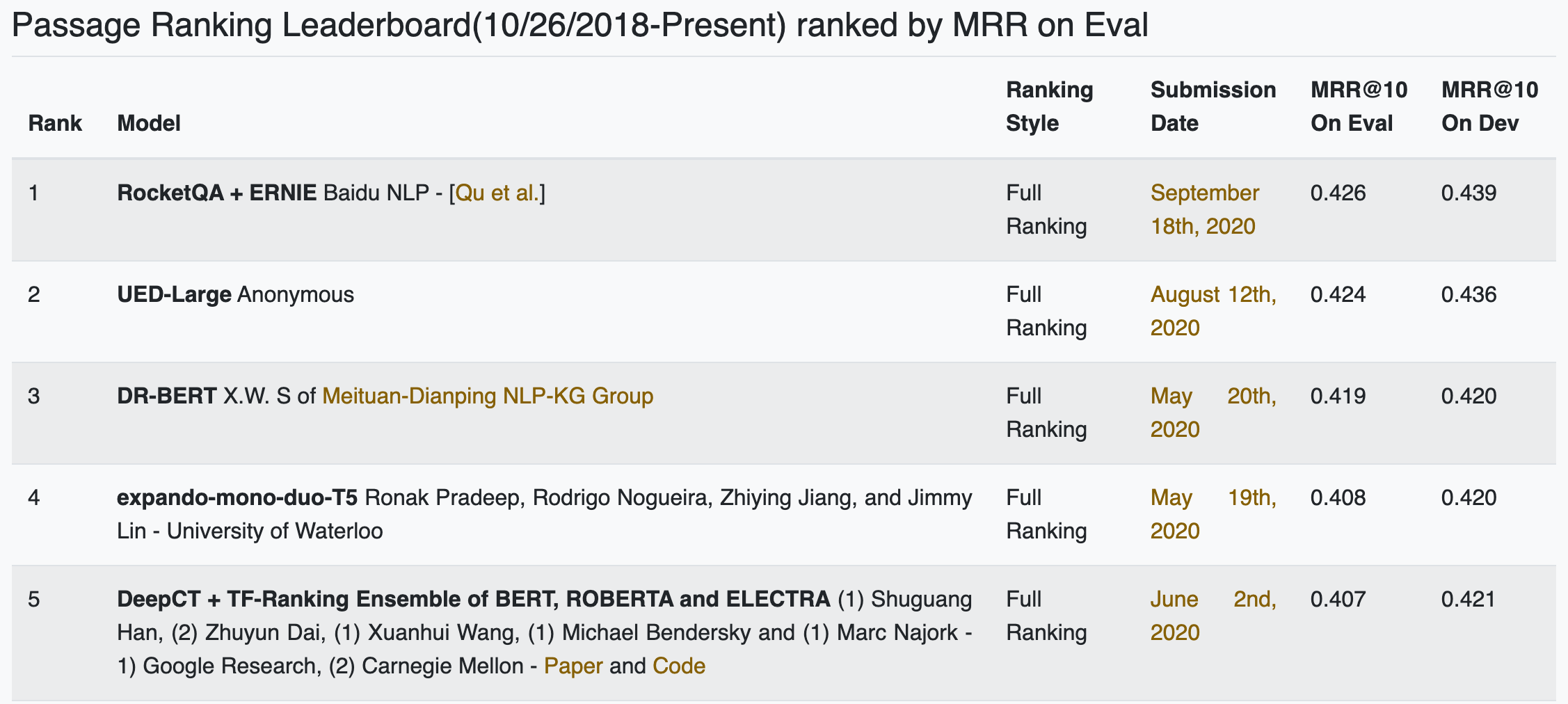

We also participated in the MSMARCO Passage Ranking shared task hosted by Microsoft. The previous performance record on the evaluation set was 42.4. Our solution, presented on Sep 18, 2020, set a new record of 42.6 for MRR@10 and took first place among 104 submissions.
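For readers unfamiliar with the metric, MRR@10 averages the reciprocal rank of the first relevant passage within the top 10 results for each query, counting zero when none appears. The sketch below is an illustrative implementation on toy data, not the official evaluation script; leaderboard figures such as 42.6 are presumably this value multiplied by 100.

```python
from typing import List, Set


def mrr_at_k(rankings: List[List[str]], relevant: List[Set[str]], k: int = 10) -> float:
    """Mean reciprocal rank of the first relevant passage within the top k."""
    if len(rankings) != len(relevant):
        raise ValueError("one ranking and one relevant set are needed per query")
    total = 0.0
    for ranked, rel in zip(rankings, relevant):
        for rank, passage_id in enumerate(ranked[:k], start=1):
            if passage_id in rel:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(rankings)


# Toy data: three queries with their ranked passage ids and relevant ids.
toy_rankings = [["a", "b"], ["c", "d"], ["e"]]
toy_relevant = [{"a"}, {"d"}, {"z"}]
score = mrr_at_k(toy_rankings, toy_relevant)  # (1 + 1/2 + 0) / 3
```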

MSMARCO Passage Ranking Leaderboard

It is also worth noting that our implementation was based on FleetX, a highly scalable distributed training engine of Baidu’s deep learning platform PaddlePaddle. We used the automatic mixed precision and gradient checkpointing functionality in FleetX to train the models with large batch sizes on limited resources. Cross-batch negative sampling is implemented with the differentiable all-gather operation provided in FleetX.

FleetX GitHub: https://github.com/PaddlePaddle/FleetX