2019-11-14

Featuring 100+ models, new mobile inference engine, and frameworks for graph & federated learning

Haifeng Wang, Baidu CTO

With artificial intelligence (AI) driving tremendous transformation across industries, deep learning frameworks have become the operating system and fuel for this new intelligent era of AI-powered innovation and growth. That’s why we built and open-sourced PaddlePaddle in 2016. As a comprehensive, powerful, and easy-to-use deep learning platform, PaddlePaddle provides developers of all skill levels with the tools, services and resources they need to rapidly adopt and implement deep learning, at scale, for continuous innovation.

Since its release in 2016, PaddlePaddle has become home to more than 1.5 million developers. Today, we’re delighted to release the newest version of PaddlePaddle, which adds 21 significant new features that will improve usability, apply deep learning to a broader range of applications, and accelerate widespread AI deployments.

In an opening keynote speech at the recent Baidu Deep Learning Developer Conference Wave Summit+ 2019 on Nov. 5 in Beijing, Haifeng Wang, Baidu CTO, said that “deep learning is highly generalized, standardized, automatic, and modularized, bringing AI from laboratory to industrial scale. We will continue to open-source PaddlePaddle and drive technological development, industrial innovation and social progress together with developers.”

What’s new in PaddlePaddle:

The new release of PaddlePaddle features 21 new products and upgrades, including Paddle Lite 2.0, four development kits, three new toolkits, EasyDL Pro, and Master mode.

Paddle Lite 2.0

Maintaining low latency and high efficiency is essential when running AI applications on resource-constrained devices. We launched Paddle Lite last October as a solution to this need. Paddle Lite is tailored for inference on mobile, embedded, and IoT devices, and is compatible with PaddlePaddle and pre-trained models from other sources.

With enhanced usability in the release of Paddle Lite 2.0, developers can now deploy ResNet-50 with seven lines of code. Paddle Lite 2.0 also adds support for more hardware units such as edge-based FPGA, and enables low-precision inference using operators with the INT8 data type.
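To make the idea of INT8 low-precision inference concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It does not use Paddle Lite's API, and it is not a description of how Paddle Lite implements its INT8 operators; the function names and sample values are assumptions chosen for illustration.

```python
# Illustrative only: symmetric per-tensor INT8 quantization in plain Python.
# This is NOT Paddle Lite's API; every name below is hypothetical.

def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


def int8_dot(qa, scale_a, qb, scale_b):
    """Dot product accumulated in integers, rescaled to float once at the end."""
    acc = sum(x * y for x, y in zip(qa, qb))
    return acc * scale_a * scale_b


weights = [0.5, -1.0, 0.25, 0.75]
activations = [1.0, 2.0, -0.5, 0.0]

qw, sw = quantize_int8(weights)
qx, sx = quantize_int8(activations)

approx = int8_dot(qw, sw, qx, sx)                          # low-precision result
exact = sum(w * x for w, x in zip(weights, activations))   # float reference
```

The integer dot product stays close to the float reference while each operand is stored as a small integer, which is the trade-off that makes INT8 attractive on resource-constrained devices.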

Development kits (addition of ERNIE 2.0)

To further lower the barrier to fast, low-cost model development, PaddlePaddle now provides four end-to-end development kits: ERNIE for semantic understanding (NLP), PaddleDetection and PaddleSeg for computer vision (CV), and Elastic CTR for recommendation.

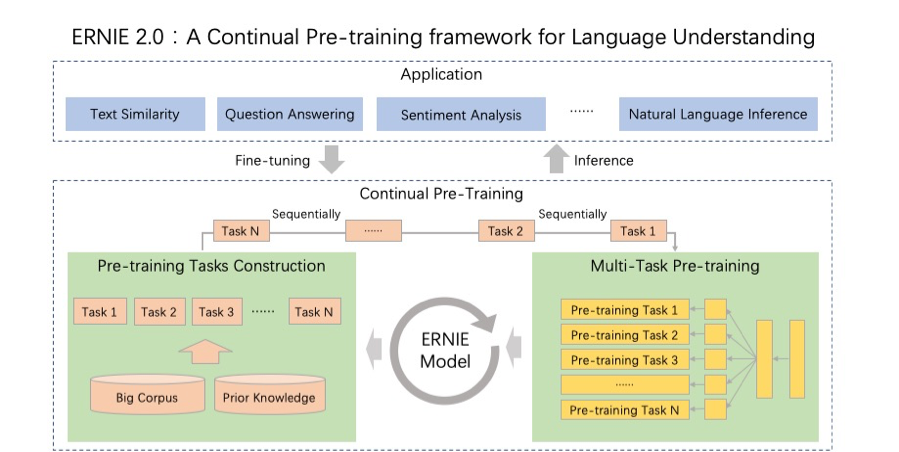

ERNIE is a continual pre-training framework for semantic understanding. By incrementally gaining knowledge through multi-task learning, ERNIE outperforms BERT and XLNet from Google on 16 tasks, including English tasks on GLUE benchmarks and several Chinese tasks.

The framework of ERNIE 2.0

PaddleSeg is an end-to-end image segmentation library supporting everything from data augmentation and modular design to high performance and end-to-end deployment. PaddleDetection has been upgraded with the addition of 60+ easy-to-use object detection models.

Elastic CTR is a newly released solution that documents the process for distributed training on Kubernetes (k8s) clusters and offers one-click deployment of distributed parameter serving for prediction.

Graph & federated & multi-task learning

Well-suited for tasks on unstructured data, graph neural networks are garnering attention from the research community. The newly released Paddle Graph Learning (PGL) framework is designed to support heterogeneous graph learning under both the walk-based and message-passing paradigms. PGL now provides 13 mainstream graph learning models.
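As a rough illustration of the message-passing paradigm, the sketch below runs one round of mean aggregation over a tiny directed graph in plain Python. It is not PGL's API; the graph, the feature vectors, and the function name are assumptions made for the example.

```python
# Illustrative only: one round of mean-aggregation message passing.
# This is NOT PGL's API; the graph and all names are hypothetical.

def message_passing_step(features, edges):
    """Each node averages its own feature vector with those of its in-neighbors."""
    dim = len(next(iter(features.values())))
    sums = {node: list(vec) for node, vec in features.items()}  # start from self
    counts = {node: 1 for node in features}
    for src, dst in edges:
        # The source node "sends" its features to the target node.
        for i in range(dim):
            sums[dst][i] += features[src][i]
        counts[dst] += 1
    return {node: [s / counts[node] for s in sums[node]] for node in features}


features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
edges = [("a", "b"), ("c", "b"), ("b", "a")]  # directed (source, target) pairs

updated = message_passing_step(features, edges)
```

Stacking several such rounds lets information travel across multiple hops. A walk-based method would instead sample node sequences from the graph and learn embeddings from those sequences.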

Another hot topic today is federated learning, in large part due to rising concerns over data privacy. As a distributed machine learning approach, federated learning offers a viable way to train models on a large corpus of decentralized data. The PaddleFL framework makes it quick and convenient to research federated learning and AI privacy algorithms: it implements the FedAvg algorithm and a differential privacy-based SGD algorithm, and supports research on distributed, secure shared learning algorithms.
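The aggregation step at the core of FedAvg can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not PaddleFL's API: the function name, the client weight vectors, and the sample counts are all hypothetical.

```python
# Illustrative only: the server-side averaging step of FedAvg.
# This is NOT PaddleFL's API; every name and number below is hypothetical.

def fed_avg(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Each client trains locally and sends back only its parameters,
# never its raw data.
client_weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
client_sizes = [10, 30, 60]  # number of local training examples per client

global_weights = fed_avg(client_weights, client_sizes)
```

Clients with more local data pull the global model further toward their own parameters. In a full training loop, the server would send `global_weights` back to the clients and repeat for many rounds.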

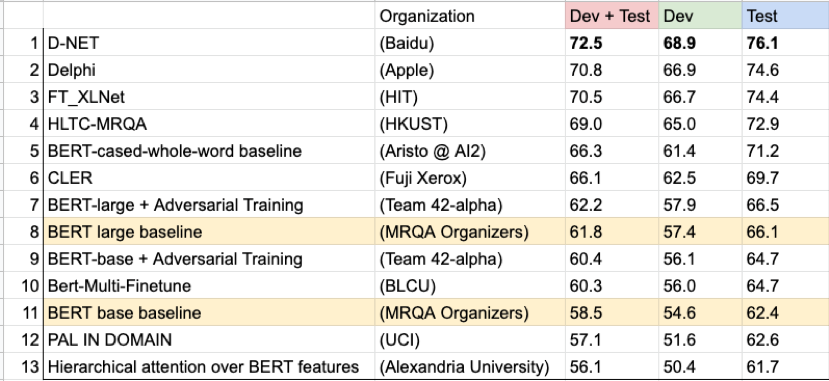

We have also open-sourced the new multi-task learning framework, PALM, which won the Machine Reading for Question Answering (MRQA) 2019 championship. Developers can implement multi-task learning algorithms based on ERNIE, BERT, and other pre-trained models with only a few lines of code.

Baidu’s champion model, D-Net, achieves 72.5 F1 on the 12 held-out datasets.

EasyDL Pro

EasyDL is an AI platform for developers without machine learning expertise to train and build custom models via a drag-and-drop interface. Over 65,000 enterprises have used EasyDL to build 169,000+ models in manufacturing, agriculture, service industries, and more. We are releasing EasyDL Pro, a one-stop AI development platform for algorithm engineers to deploy AI models with fewer lines of code.

Master mode

We are introducing Master mode to help developers better customize models for specific tasks. At the heart of Master mode is a large library of pre-trained models and tools for transfer learning.

New Upgrades

Also released in the new PaddlePaddle is a series of new upgrades:

Support for more operators, upgraded APIs for flexibility and usability, and improved documentation.

PaddleSlim, a PaddlePaddle module for model compression, now has enhanced quantization training and a hardware-aware search capability for small models.

100+ new models across NLP, CV, speech, and recommendation, including Baidu's championship-winning models from MRQA at EMNLP-IJCNLP 2019, ActivityNet Challenge 2019, Detection in the Wild Challenge 2019 Objects365 Full Track, and CVPR LIP Challenge 2019.

PaddleHub, a toolkit for managing pre-trained models and transfer learning capability, now has an auto fine-tune function for hyper-parameter optimization.

PARL, a high-performance distributed training framework for reinforcement learning (RL), has been upgraded with more parallel RL mechanisms and support for parallel evolutionary algorithms. Our RL team recently won the Best Performance Track of the NeurIPS 2019 Challenge: Learn to Move - Walk Around. The objective was to train a 3D human musculoskeletal model to move following velocity commands in real time, and our team trained it with PARL, the PaddlePaddle-based RL framework.

Paddle2ONNX and X2Paddle have been upgraded for improved conversion of trained models between PaddlePaddle and other frameworks.

We have also announced a range of benefits for deep learning developers, including 10 free AI courses, deep learning training programs in 100+ universities, funding to 1000+ companies for intelligent transformations, a series of AI challenges with up to RMB 1 million prizes awarded, and massive GPU computing resources worth RMB 100 million.

PaddlePaddle accelerates AI-driven transformation and change

PaddlePaddle has been laying a solid foundation for digital transformation across industries in China. For example, Chao Fang, chief engineer of Beijing Daheng Image Vision, said at Wave Summit+ 2019 that PaddlePaddle has improved the detection efficiency of their testing equipment by 60 percent. Hao Wu, head of AI and Robotics at Guangdong Power Grid Company, said they have built a set of intelligent power inspection tools using PaddlePaddle to detect and analyze power transmission and transformation equipment, reducing the total time spent from six hours to 15 minutes.

Tian Wu, executive director of Baidu AI Group, said, “By providing hardware support, cloud-to-edge deployment, development kits, and Master mode, we’ve significantly improved PaddlePaddle’s performance and feature-set. In the future, PaddlePaddle will keep advancing large-scale distributed computing and heterogeneous computing, providing the most powerful production platform and infrastructure for developers to accelerate the development of intelligent industries.”

Tian Wu, Executive Director of Baidu AI Group

See the PaddlePaddle GitHub for more details.