2020-06-26

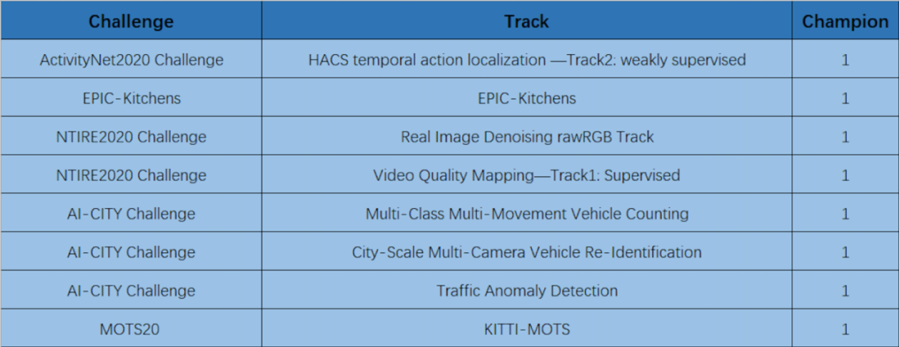

This year, Baidu won first place in eight challenge tracks and hosted two workshops, in addition to having 22 research papers accepted at the virtual Conference on Computer Vision and Pattern Recognition (CVPR) 2020. CVPR 2020, the premier annual computer vision event, concluded last Friday.

You can learn more about the work we presented below.

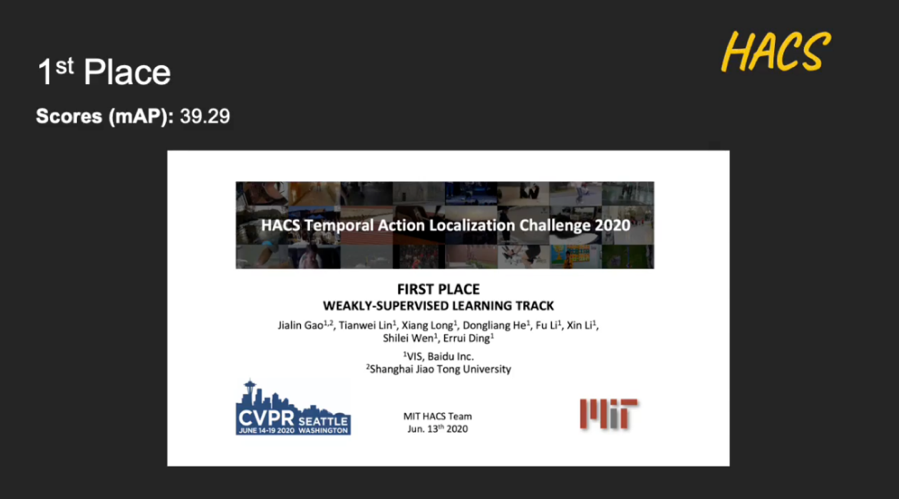

ActivityNet2020 Challenge

The ActivityNet Challenge, first hosted at CVPR 2016, focuses on recognizing daily-life, high-level, goal-oriented activities in user-generated videos such as those found on internet video portals. Its sub-track, the HACS Temporal Action Localization Challenge 2020, asks participants to temporally localize actions in untrimmed videos.

Baidu won first place with an mAP score of 39.29. In a joint effort with Shanghai Jiao Tong University, our researchers proposed a multi-modal fusion network based on a relation-aware pyramid network for temporal action localization. The technology shows strong potential for video analysis tasks such as video highlight detection.

Learn more at http://hacs.csail.mit.edu/challenge.html

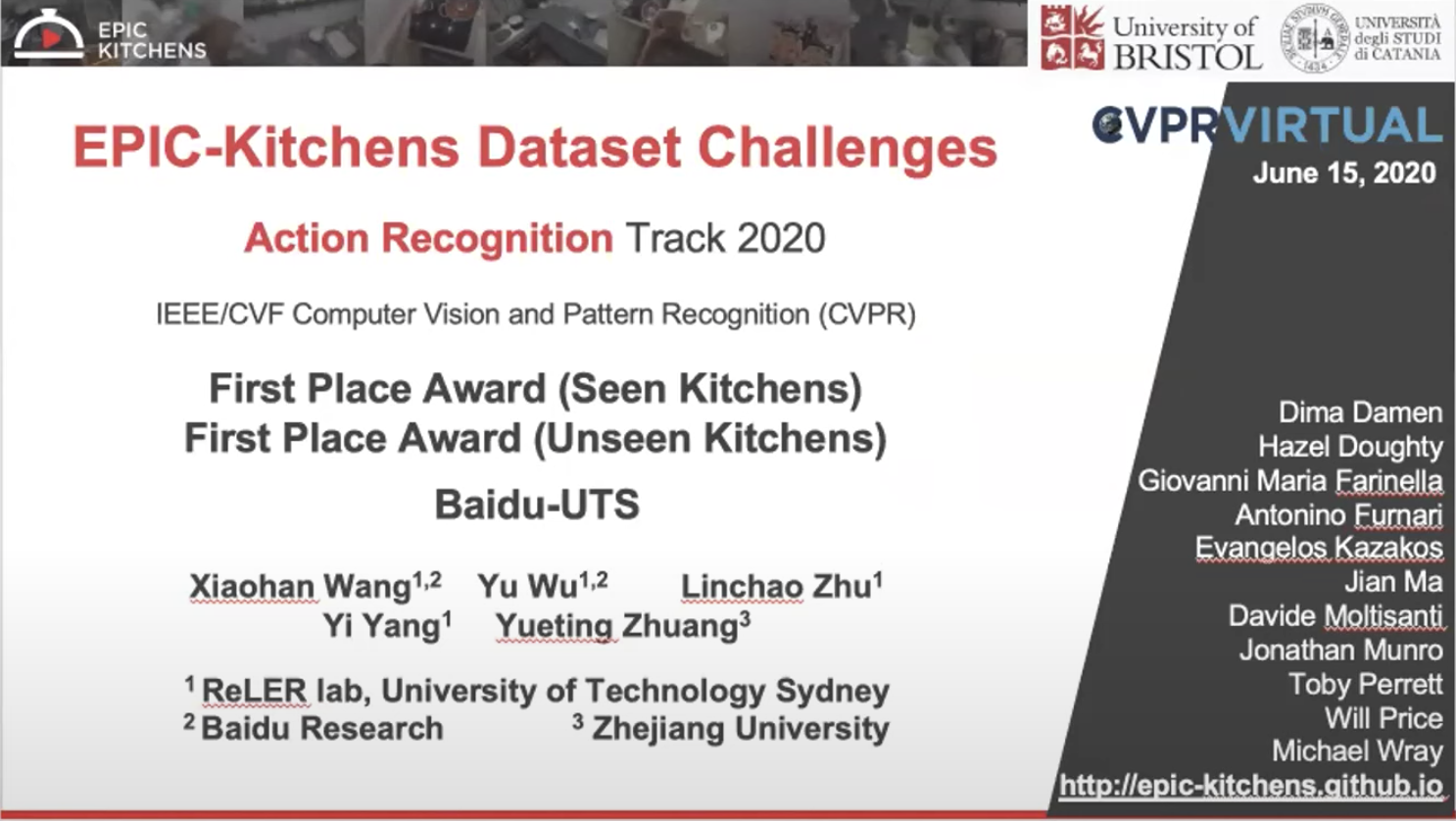

EPIC-Kitchens 2020

EPIC-Kitchens is the largest dataset in first-person (egocentric) vision, with multi-faceted, non-scripted recordings made in native environments: it captures all daily kitchen activities in the wearers' homes over multiple days. Annotations are collected using a novel live audio commentary approach.

In the Action Recognition track, Baidu won first place on both the seen-kitchens and unseen-kitchens test sets. Our researchers teamed up with the University of Technology Sydney and Zhejiang University to propose symbiotic attention for egocentric action recognition, which significantly improves the performance of 3D convolutional neural networks.

Video presentation available at https://www.youtube.com/watch?v=H3nepU4b4YU&feature=emb_logo (starting at 15:00)

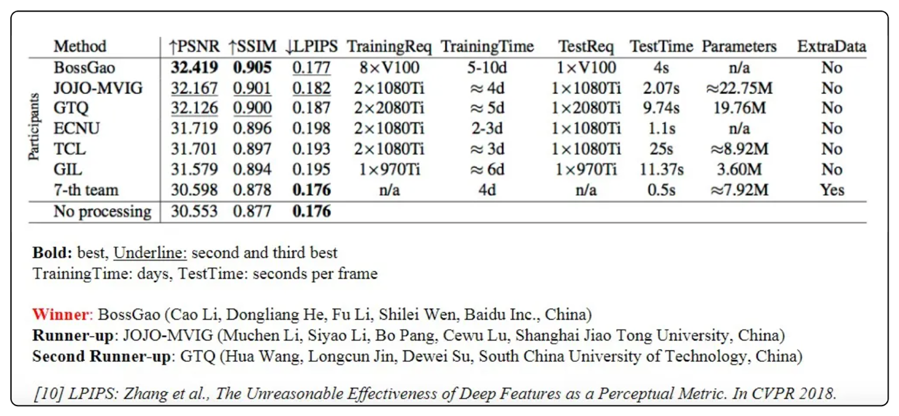

NTIRE 2020

The NTIRE (New Trends in Image Restoration and Enhancement) workshop aims to provide an overview of new trends and advances in image restoration, enhancement and manipulation: restoring degraded image content, filling in missing information, or applying the transformation and/or manipulation needed to achieve a desired target.

Baidu won the Real Image Denoising Challenge (rawRGB track), which aimed to produce denoised images in the sensor/camera-specific raw RGB color space (rawRGB). Our researchers proposed a dense residual network for image denoising based on neural architecture search (NAS).

Learn more at https://competitions.codalab.org/competitions/22230#learn_the_details

Baidu also took first place in the supervised track of the Video Quality Mapping challenge, which aimed to find a solution capable of producing videos with the best perceptual quality and similarity to the target video domain samples. Our researchers merged the existing EDVR model with DenseNet, using DenseNet to extract image features from the videos and EDVR to enable information exchange and alignment between video frames.

Learn more at https://competitions.codalab.org/competitions/20247#learn_the_details

AI City 2020

The fourth edition of the AI City Workshop at CVPR 2020 focused on intelligent traffic system problems such as signal timing planning and multi-camera vehicle tracking. Among 315 participating teams, Baidu stood out from the competition and won three of the four challenge tracks.

The Multi-Class Multi-Movement Vehicle Counting challenge required participating teams to count four-wheel vehicles and freight trucks that followed pre-defined movements in multiple camera scenes. Our researchers took first place with a detection-tracking-counting framework they proposed for movement-specific vehicle counting.

Video presentation available at https://drive.google.com/file/d/10JMNVtu_HlJ_vl4RrvzvS_HRH2QjFGge/view

The City-Scale Multi-Camera Vehicle Re-Identification challenge required teams to re-identify vehicles from vehicle crops captured by multiple cameras at multiple intersections. In a joint effort with the University of Technology Sydney, our researchers topped the challenge with an mAP score of 84.13%, proposing a robust visual representation for vehicle re-identification along with several data generation techniques.

Video presentation available at https://drive.google.com/file/d/1RtE9_D9QnCWrCj3NFOmzMHuQh08Vb0dA/view

The Traffic Anomaly Detection challenge required participating teams to submit up to 100 detected anomalies, including wrong turns, wrong driving direction, lane change errors, and all other anomalies. Teaming up with Sun Yat-sen University, our researchers won the challenge with an F1 score of 98.5% using an in-house multi-granularity tracking framework that contains a box-level tracking branch and a pixel-level tracking branch.

Video presentation available at https://drive.google.com/file/d/1qTHEwHalWbe34PTfuEKQUVispyGzHEAl/view?usp=sharing

MOTS 2020

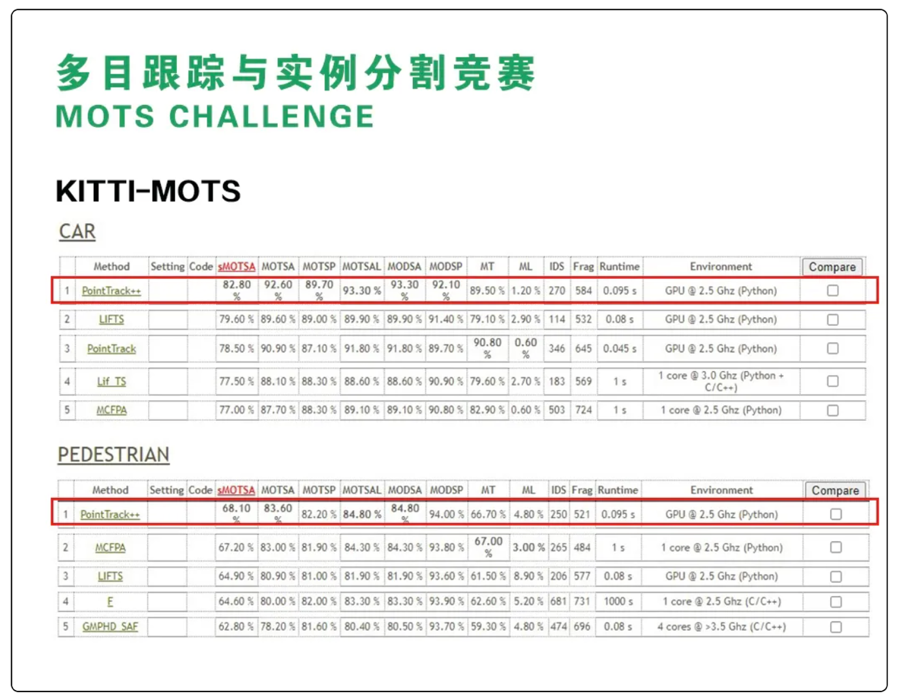

The 5th edition of the Multi-Target Tracking (MOTChallenge) Workshop aimed to push the limits of tracking by focusing on tracking at the pixel-accurate level. Baidu won the KITTI-MOTS challenge, a multi-object tracking and segmentation task for road traffic scenarios (cars and pedestrians) on the KITTI MOTS dataset. Our researchers teamed up with the University of Science and Technology of China to propose PointTrack++ for effective online multi-object tracking and segmentation.

Learn more at http://www.cvlibs.net/datasets/kitti/eval_mots.php

Baidu also hosted the Learning from Imperfect Data (LID) Workshop to discuss the latest advances in weakly supervised learning for real-world computer vision applications. We also hosted the CVPR 2020 Workshop on Media Forensics, which explored trends on all fronts of media forensics, including the detection of manipulations, biometric implications, misrepresentation, spoofing, and more.

Learn more about the LID Workshop at https://lidchallenge.github.io/

Learn more about the Workshop on Media Forensics at https://sites.google.com/view/wmediaforensics2020/home