2021-01-26

Most innovations in natural language processing, a data-intensive technology, emerge in high-resource languages like English and Chinese, leaving thousands of low-resource languages behind. While training a separate model for each language is possible, multilingual model research is an alternative that has seen significant improvements over the past few years. It points to a future where one model can understand all languages, allowing people of differing linguistic backgrounds to communicate and exchange information without barriers.

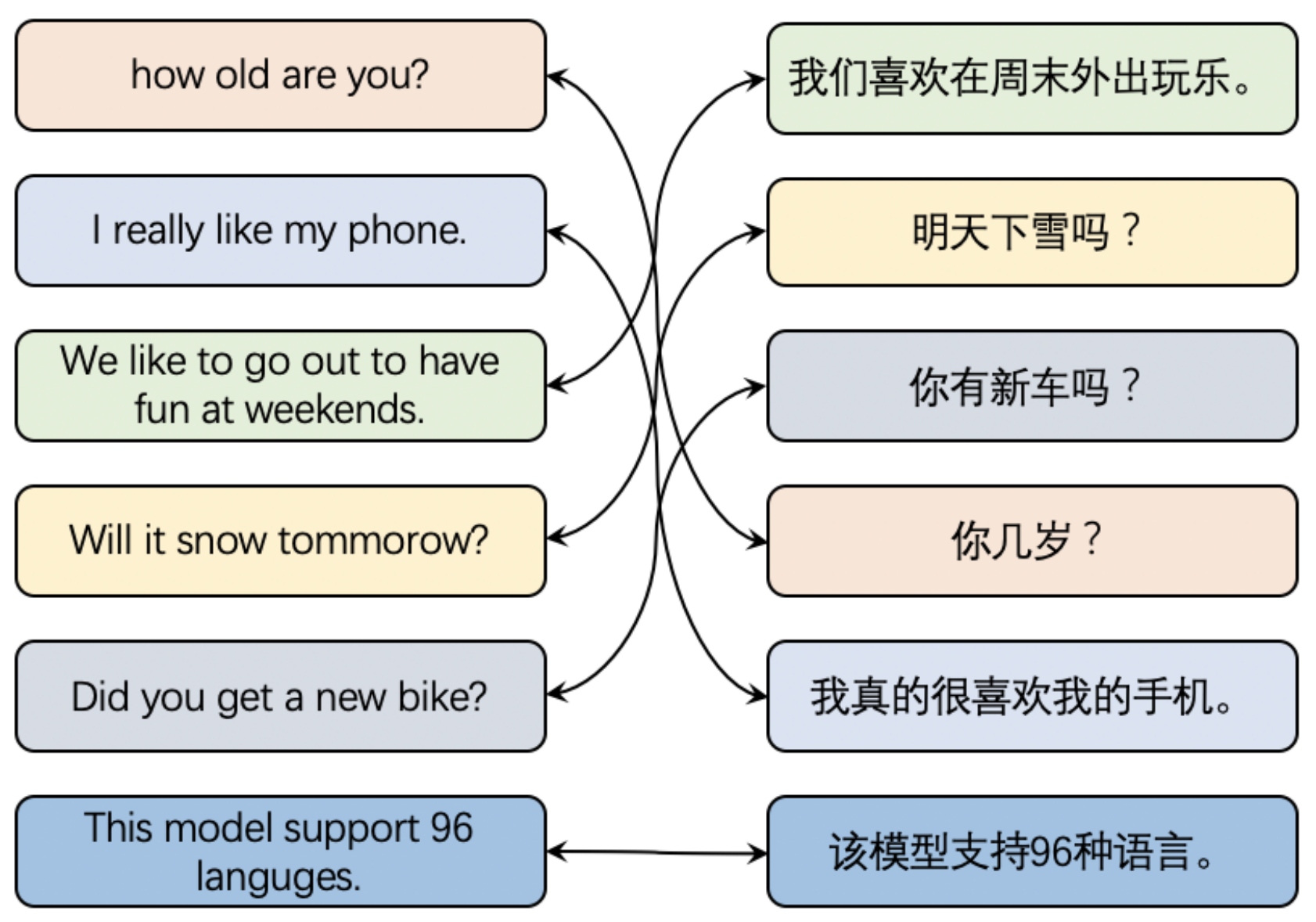

We are excited to present ERNIE-M, a new multilingual model that can understand 96 languages, and a new training method that improves the cross-lingual transferability of the model, even for data-sparse languages. Experimental results show that ERNIE-M delivers new state-of-the-art results on five cross-lingual downstream tasks and tops XTREME, a substantial multilingual multi-task benchmark proposed by Google, Carnegie Mellon University, and DeepMind. The code and pre-trained models will be made publicly available soon.

Multilingual Language Model Gains Research Attention

Most modern intelligent systems such as search engines, customer service chatbots, and smart speakers are built upon voluminous labeled monolingual data. Unfortunately, more than 6,500 languages that are currently spoken around the world have scarce data resources, presenting a huge challenge for machines to understand those languages and thereby limiting the democratization of AI.

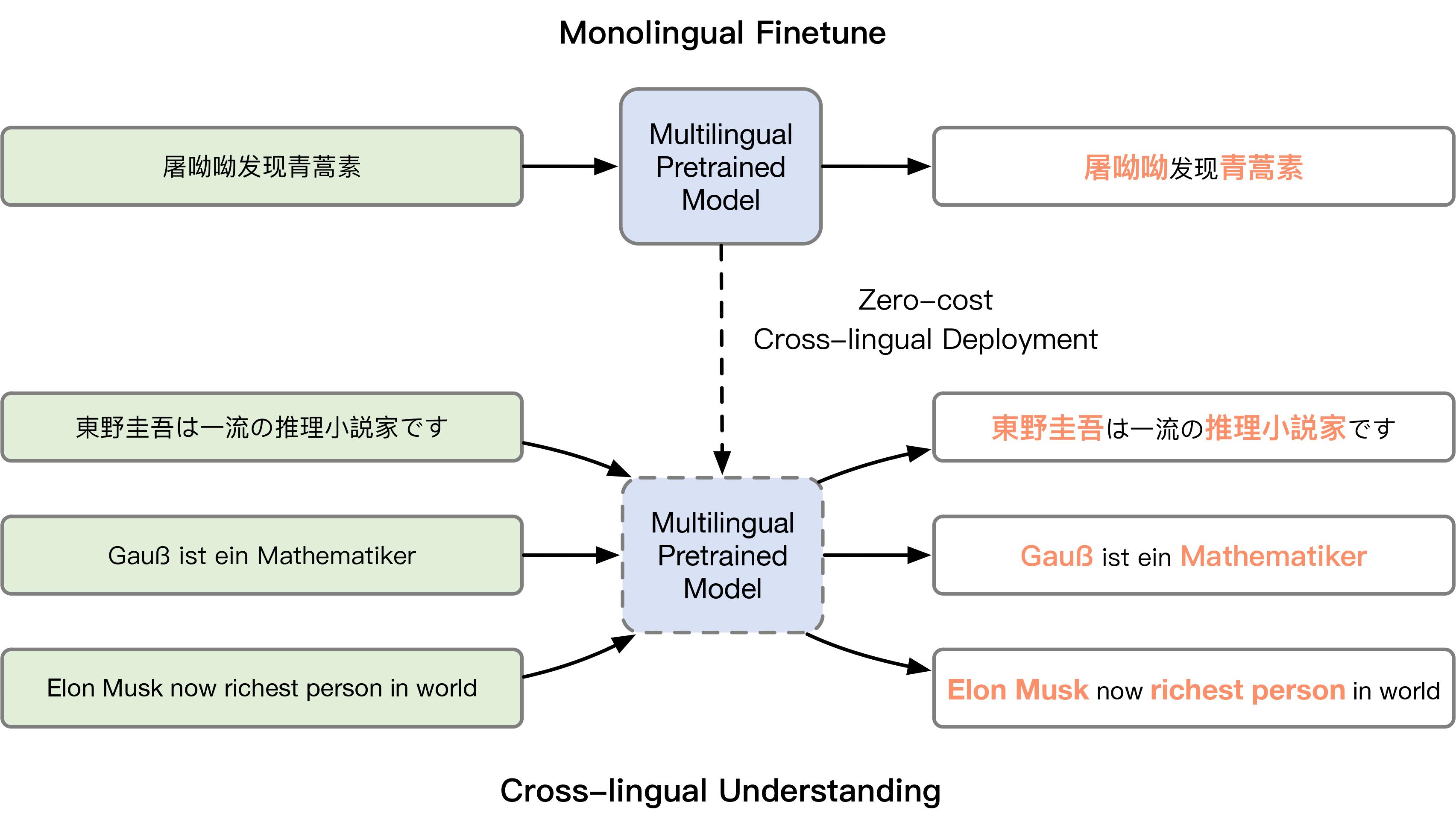

In recent years, unified multilingual model research has gained attention after studies demonstrated that the pretraining of cross-lingual language models can significantly improve their performance in cross-lingual NLP tasks. Cross-lingual models learn a shared language-agnostic representation across multiple languages and enable transfer learning from a high-resource language to a low-resource language.

The existing method, which has been proven to be effective, is to train a model on different monolingual datasets to learn semantic representation, and capture semantic alignment across different languages on parallel corpora. However, the sizes of parallel corpora are rather limited, which restricts the model’s performance.

In the paper “ERNIE-M: Enhanced Multilingual Representation by Aligning Cross-lingual Semantics with Monolingual Corpora”, we proposed a novel cross-lingual pre-training method that can learn semantic alignment across multiple languages on monolingual corpora.

The Key to ERNIE-M: Cross-lingual Alignment and Back-translation

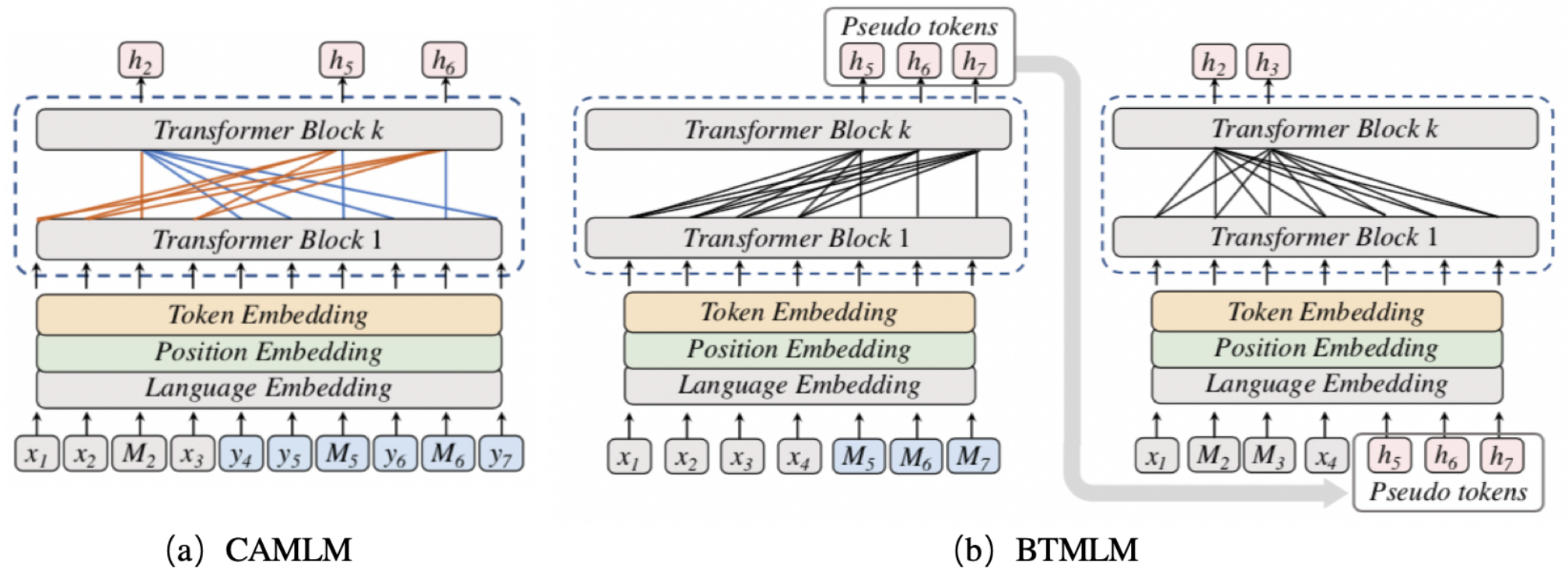

The training of ERNIE-M consists of two stages. The first stage aligns the cross-lingual semantic representation using Cross-attention Masked Language Modeling (CAMLM) on a small parallel corpus. We denote a parallel sentence pair as <source sentence, target sentence>. In CAMLM, the model learns the multilingual semantic representation by restoring the MASK tokens in the input sentences.

For example, given a parallel CH-EN sentence pair <明天会 [MASK][MASK] 吗,Will it be sunny tomorrow> as input, the model has to recover the masked tokens <天晴> ("sunny") in the source sentence by relying solely on the meaning of the target sentence, thus learning to align the semantic representations of the two languages.
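The restriction described above can be pictured as an attention-visibility rule. The following is a minimal sketch, not the authors' implementation: the function name `camlm_visible_positions`, the character-level tokenization of the Chinese sentence, and the symmetric treatment of masked target tokens are all assumptions made for illustration.

```python
from typing import Dict, List, Set


def camlm_visible_positions(
    src_len: int, tgt_len: int, masked: Set[int]
) -> Dict[int, List[int]]:
    """Map each masked position to the positions it may attend to.

    Positions index the concatenated sequence [source tokens; target tokens].
    Hypothetical helper: only the rule for masked tokens is modeled here.
    """
    total = src_len + tgt_len
    visible: Dict[int, List[int]] = {}
    for pos in sorted(masked):
        if not 0 <= pos < total:
            raise ValueError(f"position {pos} is outside the sequence")
        if pos < src_len:
            # Masked source token: only the target sentence is visible.
            visible[pos] = list(range(src_len, total))
        else:
            # Masked target token (assumed symmetric): only the source is visible.
            visible[pos] = list(range(0, src_len))
    return visible


# Source: 明 天 会 [MASK] [MASK] 吗 (6 tokens, assuming one token per character)
# Target: Will it be sunny tomorrow (5 tokens)
example = camlm_visible_positions(src_len=6, tgt_len=5, masked={3, 4})
print(example)  # {3: [6, 7, 8, 9, 10], 4: [6, 7, 8, 9, 10]}
```

Both masked source positions see only the five target-side positions, so the prediction cannot lean on the surrounding Chinese context.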

The second stage is Back-translation Masked Language Modeling (BTMLM) to align cross-lingual semantics with the monolingual corpus. Specifically, we use BTMLM to train our model, which is built on the transferability learned through CAMLM, to generate pseudo-parallel sentences from the monolingual sentences. The generated pairs are then used as the input of the model to further align the cross-lingual semantics, thus enhancing the multilingual representation.

For example, given a single input sentence <我真的很喜欢吃苹果> ("I really like eating apples"), the model trained with CAMLM synthesizes a pseudo-parallel sentence pair <我真的很喜欢吃苹果, eat apples>. The model is then tasked with recovering the MASK tokens in the input <我真的很喜欢吃[MASK][MASK], eat apples>.
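The two BTMLM steps in this example can be sketched as a small data-preparation routine. This is an illustration under stated assumptions: `build_btmlm_example` and `fake_generator` are hypothetical names, the generator is a stand-in for the CAMLM-trained model, and the Chinese sentence is split into one token per character.

```python
from typing import Callable, Dict, List, Set, Tuple

MASK = "[MASK]"


def build_btmlm_example(
    tokens: List[str],
    generate_pseudo: Callable[[List[str]], List[str]],
    mask_positions: Set[int],
) -> Tuple[List[str], Dict[int, str]]:
    """Build one BTMLM training input from a monolingual sentence.

    Step 1: generate pseudo-parallel tokens from the unmasked sentence.
    Step 2: mask the original sentence and append the pseudo tokens.
    Returns the model input and the labels to recover at masked positions.
    """
    pseudo = generate_pseudo(tokens)
    masked = [MASK if i in mask_positions else tok for i, tok in enumerate(tokens)]
    labels = {i: tokens[i] for i in sorted(mask_positions)}
    return masked + pseudo, labels


def fake_generator(tokens: List[str]) -> List[str]:
    # Stand-in for the CAMLM-trained model; returns the pseudo tokens
    # used in the example above rather than real model output.
    return ["eat", "apples"]


sentence = list("我真的很喜欢吃苹果")  # assumed character-level tokens
model_input, labels = build_btmlm_example(sentence, fake_generator, {7, 8})
print(model_input)
print(labels)  # {7: '苹', 8: '果'}
```

The masked positions 7 and 8 correspond to 苹果 ("apples"), which the model must recover with the help of the generated pseudo-parallel tokens.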

ERNIE-M is trained with monolingual and parallel corpora consisting of approximately 1.5 trillion characters across 96 languages including Chinese, English, French, Afrikaans, Albanian, Amharic, Sanskrit, Arabic, Armenian, Assamese, Azerbaijani and more.

Experimental Results

We utilized five cross-lingual evaluation benchmarks to test the efficacy of ERNIE-M:

lXNLI for cross-lingual natural language inference,

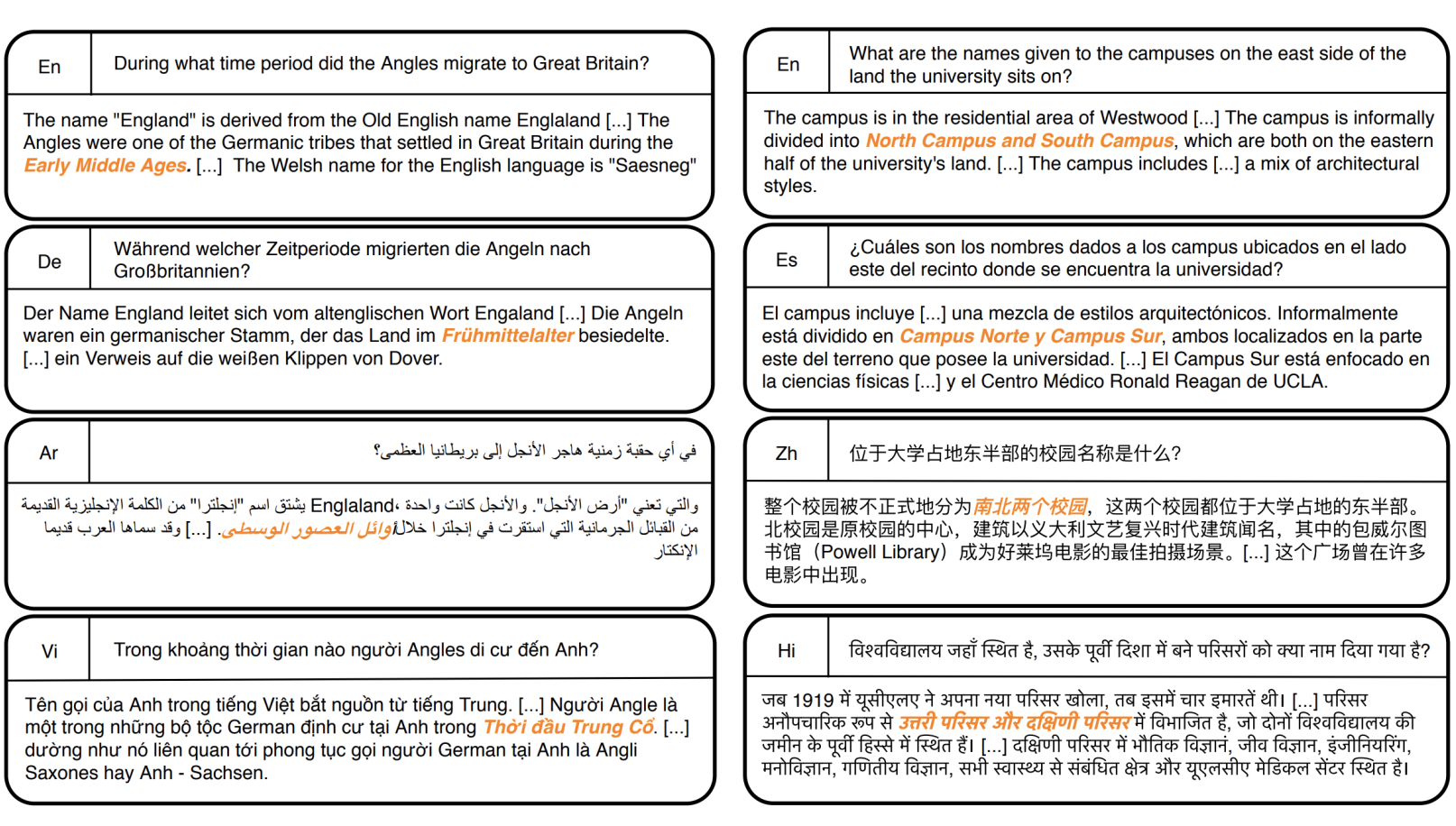

lMLQA for cross-lingual question answering,

lCoNLL for cross-lingual named entity recognition,

lPAWS-X for cross-lingual paraphrase identification,

lTatoeba for cross-lingual retrieval.

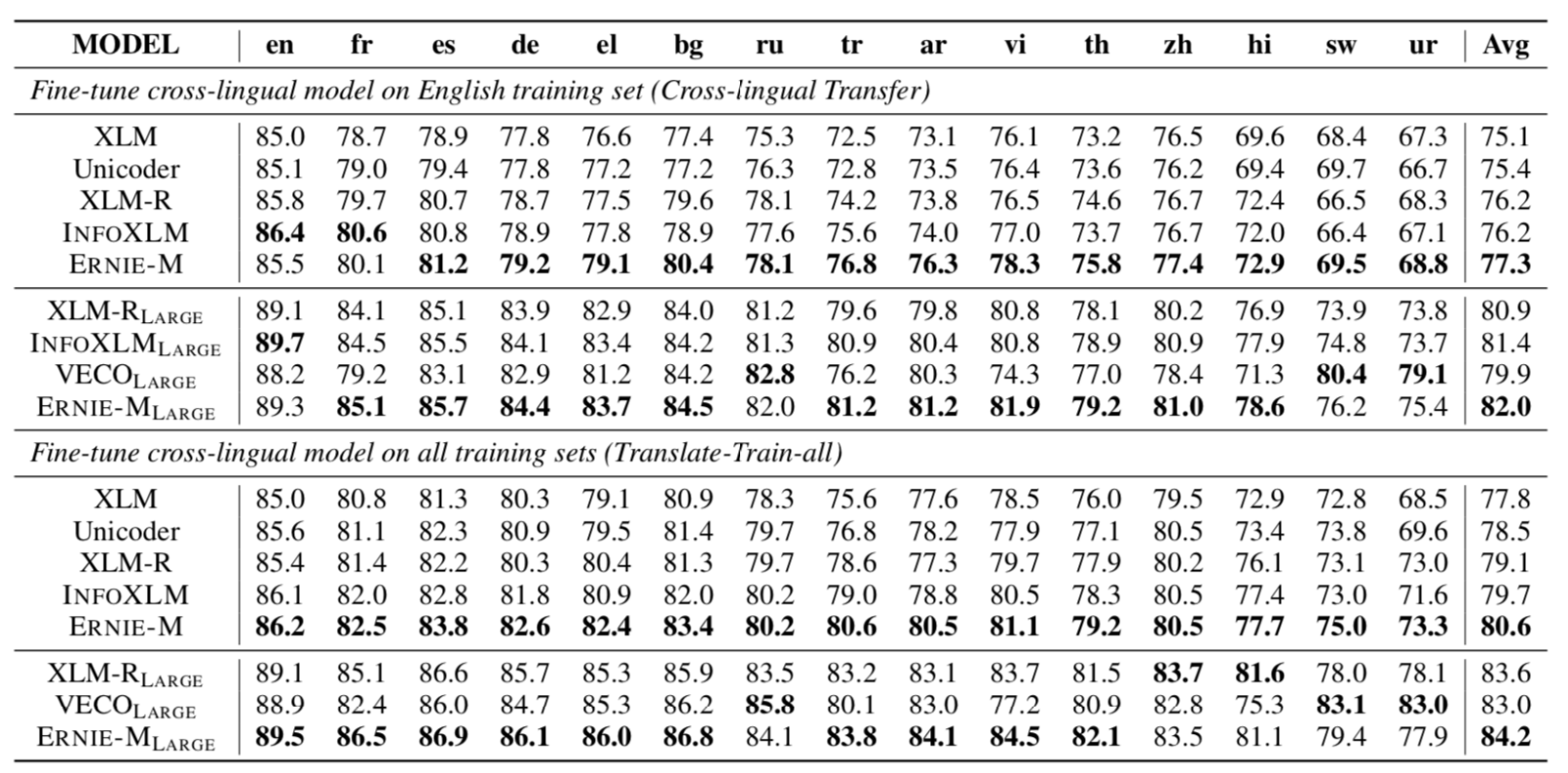

We evaluated ERNIE-M in two settings: a cross-lingual transfer setting, in which the model is fine-tuned on the English training set and evaluated on the test sets of other languages, and a multilingual fine-tuning setting, in which the model is fine-tuned on the concatenated training sets of all languages and evaluated on each language's test set.

Cross-lingual Sentence Retrieval: The goal of this task is to extract parallel sentences from bilingual corpora. ERNIE-M allows users to retrieve results in multiple languages, such as English, French, and German, using only Chinese queries. This technology can bridge the gap between information expressed in different languages and help people find more valuable information. ERNIE-M achieved an accuracy of 87.9% when tested on a subset of the Tatoeba dataset that contains 36 languages.
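Retrieval accuracy of this kind is commonly computed by nearest-neighbor search over sentence embeddings. The sketch below assumes cosine similarity as the matching criterion and uses toy vectors; the function names and the embedding values are hypothetical and are not ERNIE-M outputs.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / norm


def retrieval_accuracy(
    queries: List[Sequence[float]], candidates: List[Sequence[float]]
) -> float:
    """Fraction of queries whose nearest candidate is the gold match.

    The gold match for queries[i] is assumed to be candidates[i].
    """
    hits = 0
    for i, query in enumerate(queries):
        best = max(range(len(candidates)), key=lambda j: cosine(query, candidates[j]))
        hits += int(best == i)
    return hits / len(queries)


# Toy embeddings: each query should be closest to the candidate at the same index.
queries = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
candidates = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1], [0.7, 0.0, 0.6]]
print(retrieval_accuracy(queries, candidates))  # 1.0
```

In practice the vectors would come from the encoder's sentence representations, with one side in the query language and the other in the target language.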

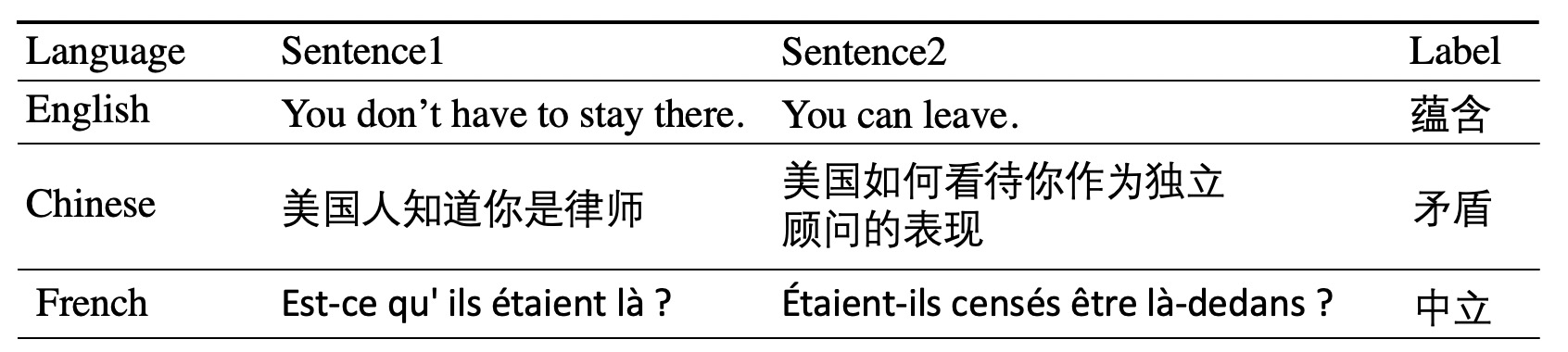

Cross-lingual Natural Language Inference: Cross-lingual natural language inference is the task of determining whether the relationship between two input sentences is entailment, contradiction, or neutral. ERNIE-M achieved an average accuracy of 82.0% when fine-tuned on the English training set and 84.2% when fine-tuned on all training sets.

Cross-lingual Question Answering: Question answering is a classic NLP task that tests the machine’s ability to automatically answer questions in a natural language. We used the multilingual question answering (MLQA) dataset, which has the same format as SQuAD v1.1 but contains seven languages. We fine-tuned ERNIE-M by training with English data. The model achieved an average accuracy of 55.3%.

Cross-lingual Named Entity Recognition: Named entity recognition (NER), a subtask of information extraction, seeks to locate and classify named entities in text. We evaluated ERNIE-M on the CoNLL-2002 and CoNLL-2003 datasets, which cover English, Dutch, Spanish, and German. When fine-tuned on English data and evaluated on all four languages, ERNIE-M achieved an average F1 score of 81.6%. When fine-tuned on all training sets, the average F1 score improved to 90.8%.
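For readers unfamiliar with the metric, NER F1 is usually computed at the entity level: a predicted entity counts as correct only if both its span and its type match a gold entity exactly. The sketch below illustrates that definition with made-up spans; the function name and the data are hypothetical and unrelated to the reported scores.

```python
from typing import Set, Tuple

Entity = Tuple[int, int, str]  # (start token, end token, entity type)


def entity_f1(gold: Set[Entity], predicted: Set[Entity]) -> float:
    """Entity-level F1: harmonic mean of precision and recall on exact matches."""
    true_positives = len(gold & predicted)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


# Made-up example: the second prediction has the right span but the wrong type.
gold = {(0, 2, "PER"), (5, 6, "LOC"), (8, 9, "ORG")}
predicted = {(0, 2, "PER"), (5, 6, "ORG"), (8, 9, "ORG")}
print(round(entity_f1(gold, predicted), 3))  # 0.667
```

Two of the three predictions match exactly, so precision and recall are both 2/3 and the F1 score is 2/3 as well.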

Cross-lingual Paraphrase Identification: Paraphrase identification is the task of determining whether two texts have the same or similar meanings. We evaluated ERNIE-M on PAWS-X, a paraphrase identification dataset covering seven languages. ERNIE-M achieved an average accuracy of 89.5% when fine-tuned on the English training set and 91.8% when fine-tuned on all training sets.

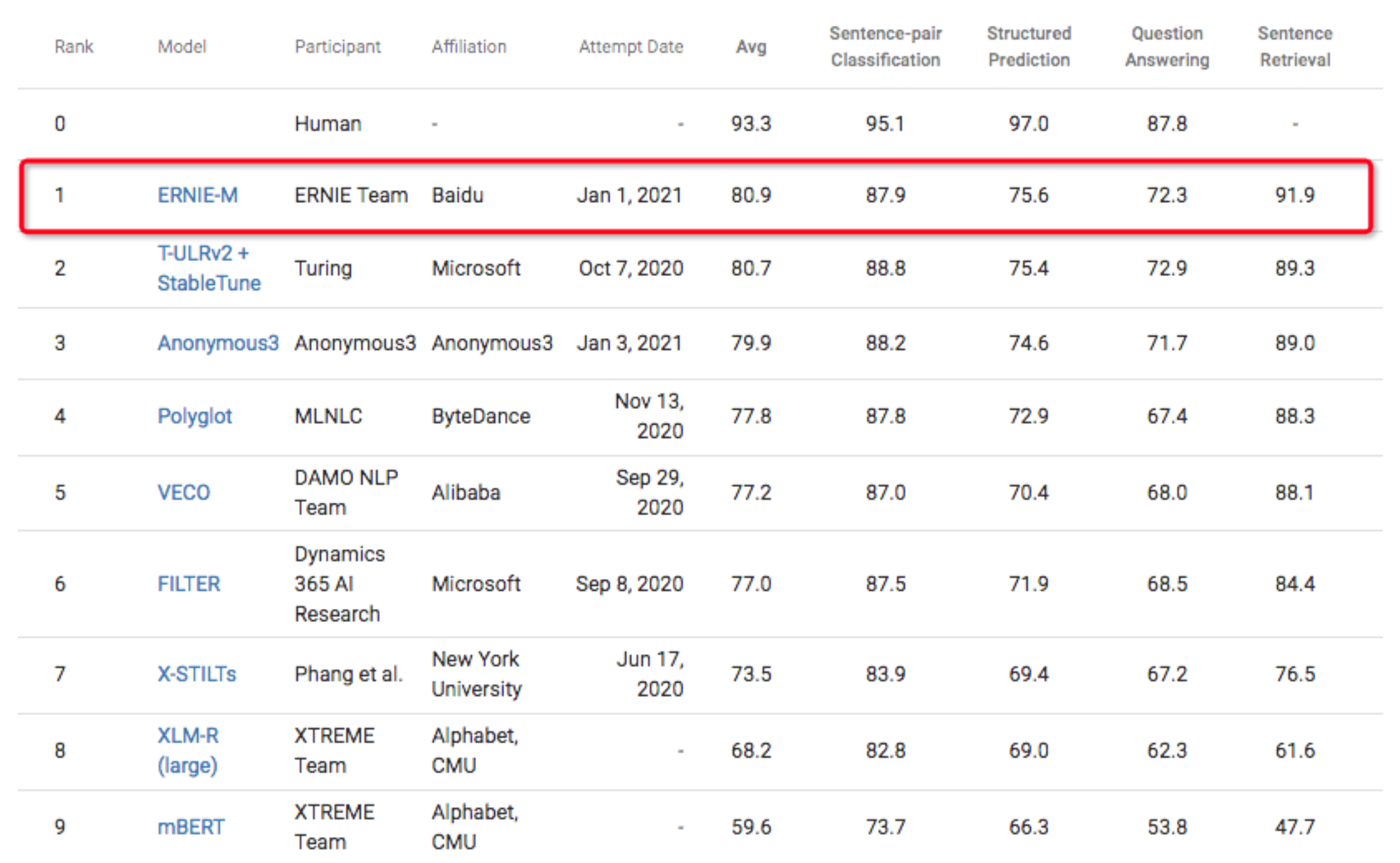

Furthermore, we evaluated ERNIE-M on XTREME, a substantial multilingual multi-task benchmark that covers 40 typologically diverse languages across 12 language families and includes nine tasks that collectively require inference about different levels of syntax or semantics. ERNIE-M scored 80.9, a new record score that tops the leaderboard.

ERNIE-M has wide-ranging applications and implications. For example, with this technology we can extend our Chinese-based AI systems to other languages and improve our products and services for global users. In addition, ERNIE-M is expected to assist linguists and archaeologists in understanding languages that are considered endangered or lost.